Speech Recognition Systems

Rp500,000 Rp99,000

Description

About this course

This course is part of the Microsoft Professional Program in Artificial Intelligence.

Developing and understanding Automatic Speech Recognition (ASR) systems is an interdisciplinary activity that draws on expertise in linguistics, computer science, mathematics, and electrical engineering.

When a person speaks a word, their voice produces a time-varying pattern of sounds. These sounds are waves of pressure that propagate through the air. The sounds are captured by a sensor, such as a microphone or microphone array, and turned into a sequence of numbers representing the pressure change over time. The automatic speech recognition system converts this time-pressure signal into a time-frequency-energy signal. The system has been trained on a curated set of labeled speech sounds, and it assigns labels to the sounds it is presented with. These acoustic labels are combined with a model of word pronunciation and a model of word sequences to create a textual representation of what was said.
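As a rough illustration of the step from a time-pressure signal to a time-frequency-energy signal, the sketch below cuts a sample sequence into overlapping frames, applies a Hann window to each, and measures the energy in each frequency bin. It is not the course's lab code: the function names, the frame length of 64 samples, the hop of 32 samples, and the synthetic 1 kHz test tone are all assumptions chosen for the example, and the naive DFT stands in for the FFT a real system would use.

```python
import cmath
import math


def frame_signal(samples, frame_len, hop):
    """Split a sample sequence into overlapping frames of frame_len samples."""
    return [samples[start:start + frame_len]
            for start in range(0, len(samples) - frame_len + 1, hop)]


def power_spectrum(frame):
    """Hann-window one frame and return the energy in each non-negative frequency bin."""
    n = len(frame)
    windowed = [x * (0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)))
                for i, x in enumerate(frame)]
    spectrum = []
    for k in range(n // 2 + 1):  # naive DFT; a real system would use an FFT
        coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(windowed))
        spectrum.append(abs(coeff) ** 2)
    return spectrum


def spectrogram(samples, frame_len=64, hop=32):
    """Return one power spectrum per frame: a time x frequency grid of energy."""
    return [power_spectrum(f) for f in frame_signal(samples, frame_len, hop)]


# Hypothetical input: 0.05 s of a 1 kHz tone sampled at 8 kHz.
SAMPLE_RATE = 8000
tone = [math.sin(2 * math.pi * 1000 * t / SAMPLE_RATE) for t in range(400)]

spec = spectrogram(tone)
# Find the frequency bin holding the most energy in the first frame.
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * SAMPLE_RATE / 64  # bin spacing = sample rate / frame length
```

Each row of `spec` is one time step and each column is one frequency bin, so for this synthetic tone the energy concentrates in the bin at 1000 Hz. Later stages of a typical front end, such as mel filtering and log compression, would operate on a grid like this one.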

Instead of exploring one part of this process deeply, this course is designed to give an overview of the components of a modern ASR system. In each lecture, we describe a component’s purpose and general structure. In each lab, the student creates a functioning block of the system. At the end of the course, we will have built a speech recognition system almost entirely out of Python code.

What you’ll learn

- Fundamentals of Speech Recognition

- Basic Signal Processing for Speech Recognition

- Acoustic Modeling and Labeling

- Common Algorithms for Language Modeling

- Decoding Acoustic Features into Speech

Prerequisites

- Some Python experience

- Basic Machine Learning principles

- Knowledge of probability and statistics

Estimated time: 20-24 hours

Module 1 Background and Fundamental Theory

- Fundamental Theory

- Phonetics

- Performance Metrics

- Other Considerations

- Lab

Module 2 Speech Signal Processing

- Feature Extraction

- Mel Filtering

- Log Compression

- Feature Normalization

- Lab

Module 3 Acoustic Modeling

- Markov Chains

- Problem with Markov Chains

- Hidden Markov Models

- Deep Neural Network Acoustic Models

- Training Feedforward Deep Neural Networks

- Using a Sequence-Based Objective Function

- Lab

Module 4 Language Modeling

- N-Gram Models

- Language Model Evaluation

- Operations on Language Models

- Advanced LM Topics

- Lab

Module 5 Speech Decoding

- Weighted Finite State Transducers

- WFSTs and Acceptors

- Graph Composition

- Lab

Module 6 Advanced Acoustic Modeling Techniques

- Improved Objective Functions

- Sequential Objective Function

- Connectionist Temporal Classification

- Sequence Discriminative Objective Functions

- Lab

Adrian Leven

Content Developer

Microsoft Corporation

Adrian Leven is a Content Developer at Microsoft Learning with a focus on Human-Computer Interaction. He received his B.S. in Computer Science from Stanford University.

Additional information

| Author / Publisher | Microsoft |
|---|---|
| Level | Beginner, Intermediate |
| Language | English |

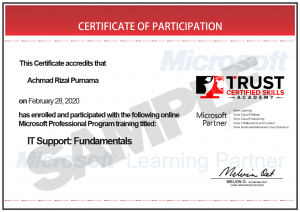

Certificate
